OM1 Develops Algorithm to Estimate EDSS Scores


OM1 has created an artificial intelligence (AI)-based algorithm to estimate scores on the expanded disability status scale (EDSS), an established method for evaluating disability and disease progression in people with multiple sclerosis (MS).

The algorithm, built using a method called machine learning, was trained to estimate EDSS scores from clinical records and then validated using real-world MS patient data.

Machine learning may help doctors make better use of EDSS scores in clinical practice, where use of the scale has been limited by its length and complexity.

“It is exciting to see a machine learning model that performs at this level based on real-world data,” Carl Marci, MD, one of the study’s authors, said in a press release. Marci is chief psychiatrist and managing director of mental health and neuroscience at OM1.

“Importantly, it can be easily applied to a neurologist’s clinical note — saving time for clinicians and adding valuable tracking information for patients,” Marci added.

The study, “Validation of a machine learning approach to estimate expanded disability status scale scores for multiple sclerosis,” was published in the Multiple Sclerosis Journal – Experimental, Translational and Clinical.

The EDSS is a well-established way of monitoring the course of MS. The scale looks at functional impairments across seven systems: pyramidal (muscle weakness, moving difficulties); cerebellar (coordination, balance); brainstem (speech, swallowing, eye movements); sensory (numbness, sensation loss); bowel and bladder function; visual function; and cognitive function.

Although widely applied in clinical trials, the use of the EDSS in clinical practice is more limited due to its time-consuming nature and the complexity of the scoring system, OM1 says.

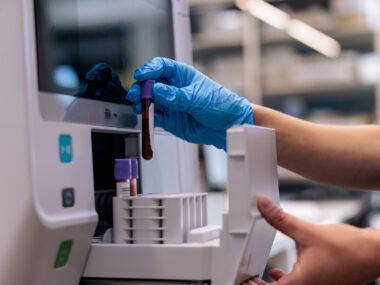

Researchers at OM1 are now using machine learning (a type of AI that uses large amounts of data to learn how to predict certain outcomes) to estimate EDSS scores from clinical records, without requiring doctors to administer the full scale.

The PremiOM MS Dataset contains clinical information from more than 19,400 MS patients. In the study, researchers screened clinical notes from nearly 14,000 of these patients to identify those with sufficient clinical information and clinician-rated EDSS scores for developing the algorithm.

Data from a subset of 684 patients were used to develop and validate the machine learning approach. Specifically, 2,632 clinical visits from 75% of the patients (513 people) were used as a “training cohort” that allowed the machine learning algorithm to learn how to predict EDSS scores from clinical charts.

The algorithm used clinical data including illness history, physical exams, review of systems, and clinical assessments to make its predictions.

“This rich, clinically relevant content allowed us to include features such as medication use, improvement or deterioration of disease, and assessments of pain that are not included in the traditional EDSS computation,” the researchers wrote.

Data from the remaining 25% of the patients (171 people), covering 857 clinical encounters, were then used to test how well the algorithm worked, that is, whether the trained algorithm could predict an EDSS score that matched the one a patient received from their doctor.
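The study divided its data by patient rather than by visit, so every encounter from a given person ended up in either the training cohort or the test cohort, never both. The study does not describe how patients were assigned, so the Python sketch below is only an illustration of a patient-level 75/25 split; the function name `split_by_patient`, the fixed random seed, and the toy records are hypothetical.

```python
import random
from collections import defaultdict


def split_by_patient(encounters, train_fraction=0.75, seed=0):
    """Split (patient_id, record) pairs so each patient lands in only one cohort.

    Hypothetical helper for illustration; not the study's actual procedure.
    """
    # Group every encounter under its patient so no patient spans both cohorts.
    by_patient = defaultdict(list)
    for patient_id, record in encounters:
        by_patient[patient_id].append(record)

    # Shuffle patient IDs (not individual encounters) with a fixed seed.
    patient_ids = sorted(by_patient)
    random.Random(seed).shuffle(patient_ids)

    n_train = round(len(patient_ids) * train_fraction)
    train_ids = set(patient_ids[:n_train])

    train, test = [], []
    for pid in patient_ids:
        cohort = train if pid in train_ids else test
        cohort.extend((pid, record) for record in by_patient[pid])
    return train, test


# Toy data: 684 made-up patients with 1 to 8 visits each.
rng = random.Random(42)
encounters = [
    (pid, f"note-{pid}-{v}")
    for pid in range(684)
    for v in range(rng.randint(1, 8))
]
train, test = split_by_patient(encounters)
train_patients = {pid for pid, _ in train}
test_patients = {pid for pid, _ in test}
print(len(train_patients), len(test_patients))  # 513 171
```

With 684 patients, a 75% fraction yields 513 training patients and 171 test patients, matching the cohort sizes reported in the study. Splitting by patient keeps the test set from containing later visits of people the model already saw during training, which would otherwise inflate its apparent accuracy.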

Results showed the algorithm performed well overall, with an area under the curve (AUC) value of 0.91. AUC values range from 0 to 1 and reflect how well an algorithm distinguishes between outcomes: 1 indicates perfect performance, 0.5 is no better than chance, and 0 means the algorithm is wrong every time.
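The article does not explain how the study framed its AUC evaluation. Because AUC is defined for a yes/no outcome, this sketch assumes each case is labeled 1 or 0 (for example, above or below a hypothetical EDSS cutoff) and shows the standard rank-based calculation: the fraction of positive/negative pairs in which the positive case receives the higher score. The function name `auc` and the toy scores are illustrative only.

```python
def auc(labels, scores):
    """Rank-based AUC: the probability that a randomly chosen positive case
    receives a higher score than a randomly chosen negative case (ties count 1/2).
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one positive and one negative case")

    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0  # pair ranked correctly
            elif p == n:
                wins += 0.5  # tie: half credit
    return wins / (len(positives) * len(negatives))


labels = [0, 0, 1, 1]
print(auc(labels, [0.1, 0.2, 0.8, 0.9]))  # 1.0 (perfect ranking)
print(auc(labels, [0.9, 0.8, 0.2, 0.1]))  # 0.0 (every pair ranked wrongly)
print(auc(labels, [0.5, 0.5, 0.5, 0.5]))  # 0.5 (no better than chance)
```

Read this way, an AUC of 0.91 would mean that in roughly 91% of positive/negative pairs the model scored the positive case higher. The pairwise loop is quadratic, which is fine for illustration; libraries compute the same value more efficiently by sorting scores.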

The model was then applied to patients without a clinician-rated EDSS score on file, generating estimated scores for an additional 190,282 clinical encounters from 13,249 patients. The distribution of these estimated EDSS scores was similar to the distribution of scores in the validation group.

“Applying the model to real-world data sources has the potential to make these data sources more valuable for supporting research on MS disability and disease progression, monitoring treatment effectiveness, and improving patient outcomes,” the researchers wrote.

The team noted, however, that “the clinical notes used to train and validate the model are drawn from neurology practices in the United States and may not be reflective of documentation practices in other geographic locations or care settings.”
